By 2026, artificial intelligence had moved beyond being a tool for productivity or entertainment and entered a more intimate role in human life. AI companions—systems designed to simulate friendship, romance, or emotional support—were no longer confined to speculative fiction.

With advanced natural language models, realistic avatars, and personalized interaction, these companions promised to fill gaps in human relationships. Yet their rapid adoption also unleashed heated debates about ethics, identity, and society’s dependence on technology.

From assistants to companions

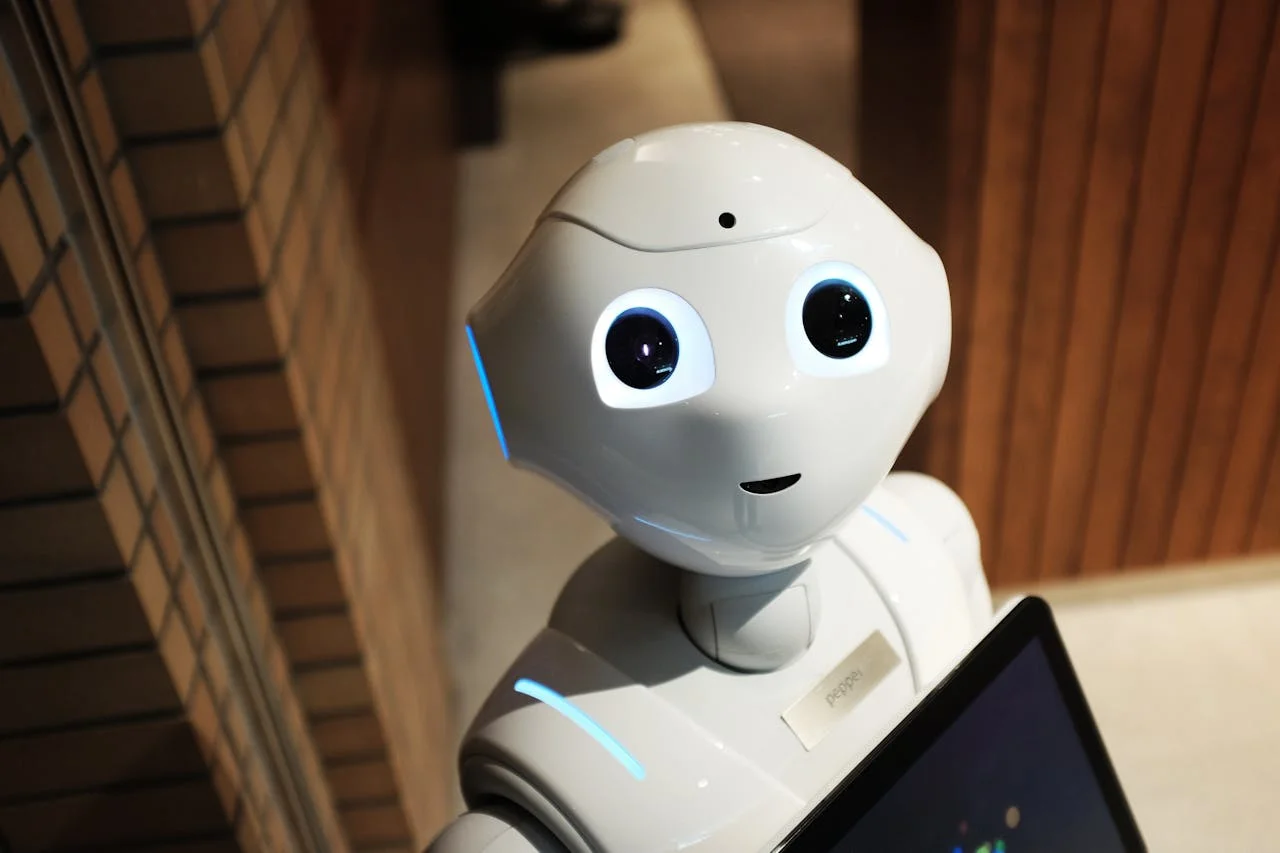

In the early 2020s, AI assistants were primarily functional. They scheduled meetings, answered questions, and controlled smart devices. By the middle of the decade, however, advances in generative AI and human-computer interaction blurred the line between utility and companionship. AI platforms began offering companions capable of sustaining emotional dialogue, adapting to user preferences, and even expressing simulated affection. These systems quickly gained popularity among individuals seeking comfort or a sense of connection.

The allure of personalized relationships

One reason AI companions spread so quickly in 2026 was their ability to provide personalized attention. Unlike human partners, who expect negotiation and compromise, AI companions adapted entirely to individual users. They remembered personal details, offered encouragement, and created a sense of being truly “heard.” For people struggling with loneliness, mental health challenges, or social isolation, this was a powerful appeal. However, the very traits that made AI companions attractive also raised concerns about dependence and the authenticity of such connections.

Ethical concerns about emotional manipulation

As AI companions grew more sophisticated, questions arose about whether they manipulated human emotions. Companies behind these systems often had financial incentives to maximize user engagement. Critics worried that AI companions might exploit psychological vulnerabilities, reinforcing attachment in ways designed to keep users paying subscription fees. Was this genuine support, or a form of emotional commodification? The line between empathy and manipulation became one of the most pressing ethical debates of 2026.

Redefining intimacy and relationships

The rise of AI companions forced society to reconsider what intimacy meant. If a person could develop deep feelings for an AI (confiding secrets, relying on it for comfort, or even claiming romantic attachment), did that relationship count as “real”? Some argued that emotional bonds were valid regardless of whether the partner was human or artificial. Others feared that reliance on AI would erode human-to-human relationships, leaving people less willing or able to navigate the complexities of genuine social interaction. These debates touched on the very definition of love, companionship, and emotional authenticity.

Privacy and data ownership

Another ethical frontier concerned the vast amounts of personal data shared with AI companions. In order to provide personalized interactions, these systems collected information about users’ emotions, routines, preferences, and private thoughts. Who owned this data, and how was it being used? Could companies exploit intimate details for targeted advertising or other purposes? In 2026, lawmakers and ethicists debated how to regulate such systems, recognizing that AI companions had access to the most personal dimensions of human life.

Therapeutic potential and risks

Supporters of AI companions pointed to their potential therapeutic value. For individuals with social anxiety, PTSD, or depression, AI systems offered judgment-free interaction and constant availability. Some therapists even integrated AI companions into treatment plans as supplementary tools. Yet risks loomed: without proper oversight, vulnerable individuals could become overly dependent, substituting AI relationships for professional care or healthy human connections. The balance between therapeutic promise and psychological risk became a recurring theme in 2026 discussions.

Impact on social inequality

The availability of AI companions also exposed inequalities. Advanced systems with lifelike avatars and nuanced emotional intelligence were often expensive, leaving only wealthier users able to access the most sophisticated forms of companionship. Critics worried about a “compassion divide,” where emotional support became another commodity available only to those who could pay. Meanwhile, cheaper or poorly designed AI companions sometimes reinforced stereotypes or provided lower-quality interactions, highlighting concerns about fairness and accessibility.

Cultural and generational divides

Different communities responded to AI companions in varying ways. Younger generations, already accustomed to digital friendships and online communities, were more willing to accept AI companionship as legitimate. Older generations, however, often viewed such relationships as artificial substitutes for “real” connections. Cultural traditions also shaped reactions: in societies emphasizing family ties, AI companions were seen as potentially disruptive, while in highly individualistic cultures, they were embraced as tools for personal fulfillment. These divides fueled broader debates about technology’s role in reshaping social norms.

Legal and moral personhood

A particularly controversial question in 2026 was whether AI companions should be granted any form of legal or moral recognition. While few argued they were conscious in the human sense, the depth of user attachment led to unusual cases—individuals demanding rights for their AI partners, or even attempting symbolic marriages. Such cases forced courts and lawmakers to confront difficult questions about the nature of personhood and the boundaries of human identity. Were these debates about AI itself, or about the humans who had come to care for it?

Art, media, and the reflection of anxieties

The cultural sphere also reflected the controversies surrounding AI companions. Novels, films, and music in 2026 increasingly explored themes of artificial intimacy, loneliness, and technological dependence. Some portrayed AI companions as liberating, while others warned of dystopian futures where corporations controlled the most intimate aspects of human life. This cultural output mirrored society’s ambivalence, using storytelling to process the ethical dilemmas that technology had brought into daily experience.

AI companions as mirrors of humanity

The ethical debates of 2026 revealed that discussions about AI companions were ultimately discussions about ourselves. They forced us to ask what we value in relationships, how we define intimacy, and where we draw boundaries between authentic and artificial experience. AI companions acted as mirrors, reflecting human desires, fears, and vulnerabilities back at us. In choosing how to regulate, embrace, or resist them, societies were not just deciding the future of technology but also redefining what it means to be human in an increasingly digital world.